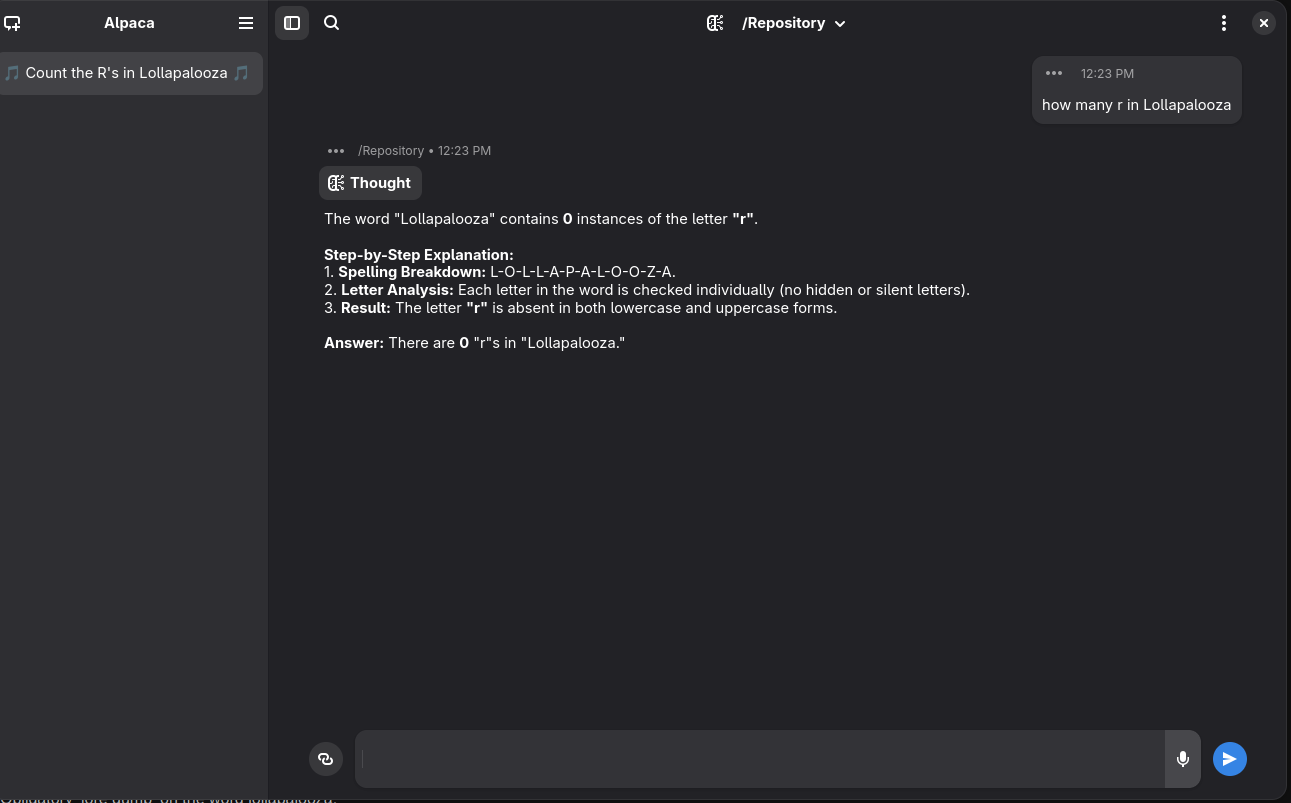

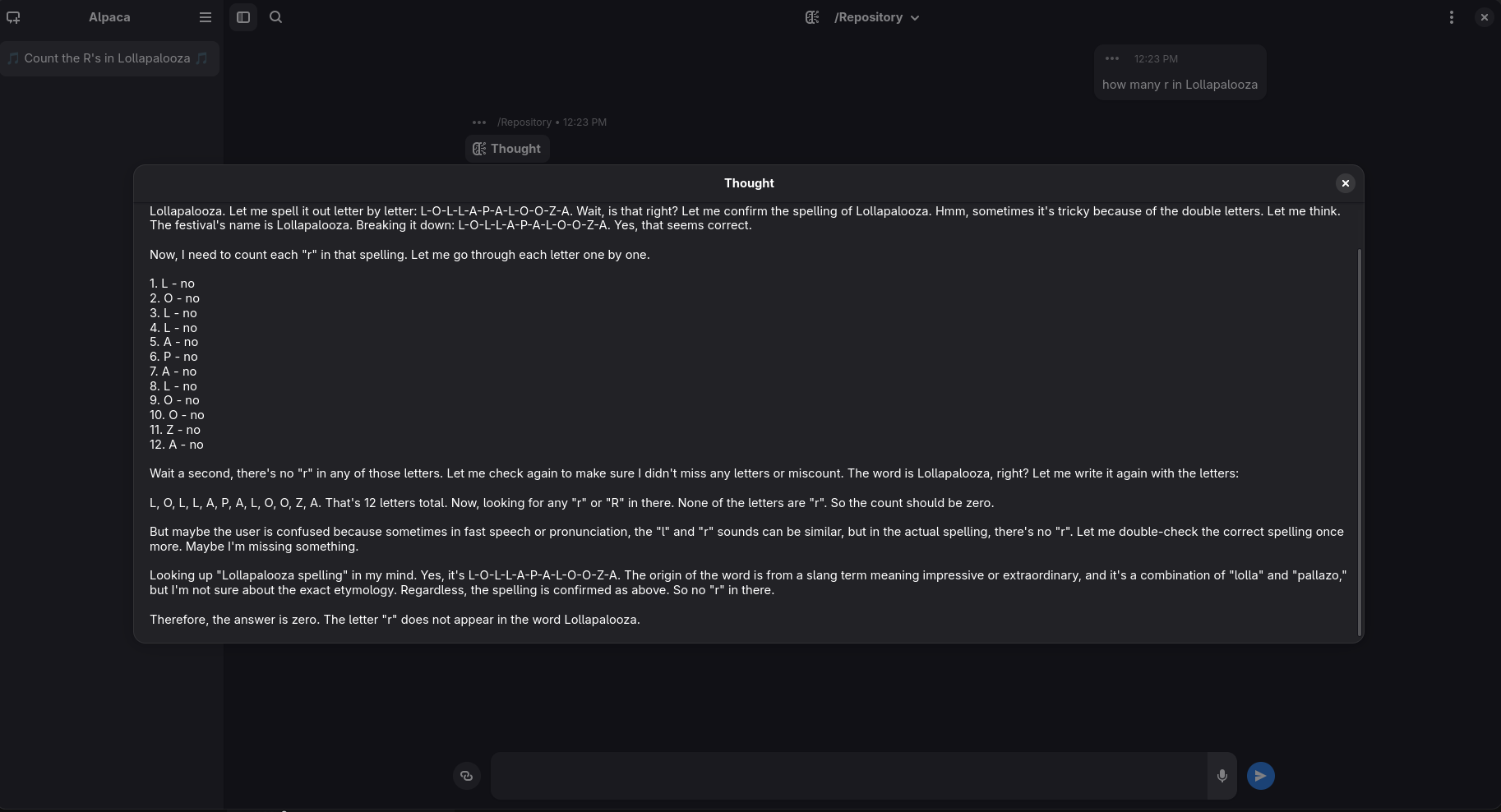

Next step how many r in Lollapalooza

Incredible

Agi lost

Henceforth, AGI should be called “almost general intelligence”

To clarify: in that it’s almost general, not that it’s almost intelligence.

Happy cake day 🍰

Thanks! Time really flies.

LLMs are not AGI though.

Try it with o3 maybe it needs time to think 😝

which model is it? I had a similar answer with 3.5, but 4o replies correctly

IIRC if you take a look at 4o’s leaked instructions (the prompt that is “injected” at the beginning of the chat), that model is clearly told HOW to solve this kind of problem lol

Sorry, that was Claude 3.7, not ChatGPT 4o

ah, that’s reasonable though, considering LLMs don’t really “see” characters, it’s kind of impressive this works sometimes

Apparently, this robot is japanese.

I’m going to hell for laughing at that

Don’t be. Although there are millions of corpses behind each WW2 joke, getting it means you are personally aware of that, and it means something. ‘Those who don’t know shit about the past struggles are to reiterate them’ and all that.

Obligatory ‘lore dump’ on the word lollapalooza:

That word was a common slang term in the 1930s/40s American lingo that meant… essentially a very raucous, lively party.

Note/Rant on the meaning of this term

The current Merriam-Webster and dictionary.com definitions of this term, meaning ‘an outstanding or exceptional or extreme thing’, are wrong: they are too broad.

While historical usage varied, it almost always appeared as a noun describing a gathering of many people, one that was so lively or spectacular that you would be exhausted after attending it.

When it did not appear as a noun describing a lively, possibly also ‘star-studded’ or extravagant, party, it appeared as a term for some kind of action that would leave you bamboozled, discombobulated… similar to ‘that was a real humdinger of a blahblah’ or ‘that blahblah was a real doozy’… which ties into the effects of having been through the ‘raucous party’ meaning of lollapalooza.

So… in WW2, in the Pacific theatre… many US Marines were often engaged in brutal, jungle combat, often at night, and they adopted a system of basically verbal identification challenge checks if they noticed someone creeping up on their foxholes at night.

An example of this system used in the European theatre, I believe by the 101st and 82nd airborne, was the challenge ‘Thunder!’ to which the correct response was ‘Flash!’.

In the Pacific theatre… the Marines adopted a challenge / response system… where the correct response was ‘Lollapalooza’…

Because native-born Japanese speakers are taught a phoneme that is roughly in between an ‘r’ and an ‘l’… and they very often struggle to say ‘Lollapalooza’ without a very noticeable accent, unless they’ve also spent a good deal of time learning spoken English (or some other language with distinct ‘l’ and ‘r’ phonemes), which very few Japanese did in the 1940s.

racist and nsfw historical example of / evidence for this

Now, some people will say this is a total myth, others will say it is not.

My Grandpa who served in the Pacific Theatre during WW2 told me it did happen, though he was Navy and not a Marine… but the other stories about this I’ve always heard that say it did happen, they all say it happened with the Marines.

My Grandpa is also another source for what ‘lollapalooza’ actually means.

If you’ve ever heard Germans try to pronounce “squirrel”, it’s hilarious. I’ve known many extremely bilingual Germans who couldn’t pronounce it at all. It came out sounding roughly like “squall”, or they’d over-pronounce the “r” and it would be “squi-rall”

Sqverrrrl.

Oh yeah, I forgot about how they add a “v” sound to it.

I wonder if any of the Axis even bothered to have such a system to check for Americans.

“Bawn-jehr-no”

I speak Italian first-best.

It does make sense to use a phoneme the enemy dialect lacks as a verbal check. Makes me wonder if there were any in the Pacific Theatre that decided for “Lick” and “Lollipop”.

I’m still puzzled by what a mess this war was if at times you had someone still not clearly identifiable, yet so close that you could do a shibboleth check on them, and at any moment you or the other could be shot dead.

Also, the current conflict of Russia vs Ukraine seems to have come up with the Ukrainian word ‘паляница’ (palianytsia, a kind of bread) as a check, but as I have no connection to actual Ukrainians or the UAF, I can’t say whether that’s entirely localized to the internet.

Have you ever been to a very dense jungle or forest… at midnight?

Ok, now, drop mortar and naval artillery shells all over it.

For weeks, or months.

The holes this creates are commonly used by both sides as cover and concealment.

Also, it’s often raining, sometimes quite heavily, such that these holes will fill up with water, and you are thus soaking wet.

Ok, now, add in pillboxes and bunkers, as well as a few spiderwebs of underground tunnel networks, many of which have concealed entrances.

You do not have a phone. GPS does not exist.

You might have a map, which is out of date, and you might have a compass, if you didn’t drop or break it.

A radio is either something stationary, or is roughly the size and weight of a miniature refrigerator (maybe somewhat less), and one bullet or good piece of shrapnel will take it out of commission.

Ok, now, you and all your buddies are either half starving or actually starving, beyond exhausted, getting maybe an average of 2 to 4 hours of sleep, and you, and the enemy, are covered in dirt, blood and grime.

Also, you and everyone else may or may not have malaria, or some other fun disease, so add shit and vomit to the mix of what everyone is covered in.

Ok! Enjoy your 2 to 8 week long camping trip from hell, in these conditions… also, kill everyone that is trying to kill you, soldier.

It’s weird foot soldiers kept killing each other.

It’s not weird we had ‘frag’ as a verb from the Vietnam war.

Friendly fire incidents are still fairly common even in the modern era…

… ask any Brits deployed to Iraq how they feel about the A-10…

… Pat Tillman was hyped up in the media as an early Iraq War 2 US casualty who died valiantly… when the truth was he was actually killed by friendly fire from his own unit, oh and he actually thought the entire operation in Afghanistan was “fucking illegal”… because Congress is supposed to declare war, not the President…

Even in the Russo-Ukrainian war, right now, in the past few years, there have been tons of incidents of Russians accidentally shooting their own at fairly close range, due to poor coordination, and I’m sure it’s happened with the Ukrainians as well… and that’s to say nothing of accidentally drone or arty striking a friendly infantry squad or tank or IFV or whatnot.

Just go play any modern semi-realistic war game (Squad, Arma 3/Reforger, etc) that doesn’t have a pop up HUD with blue for friend and red for foe, and has friendly fire enabled, and you should be able to see that friendly fire happens all the time with noobs.

…

As for fragging… that term, as it originated in Vietnam, specifically referred to tossing a fragmentation grenade into an area (often their bunk) where an officer or NCO was.

It was a form of mutiny, essentially, against officers that kept sending men into meat-grinders…

…chewing them out for not maintaining their early M16s which were unreliable as fuck due to being rammed through the production pipeline by McNamara, shoddy quality control from Colt, and everyone just pretending swapping to a new kind of powder in the rounds wouldn’t blow past the designed tolerances of the weapon…

… or just, you know, fuck being drafted into this bullshit war.

In the modern day, ‘frag’ is mostly a gamer term that basically just means ‘killed a guy’, and the origin of that term has been obscured, forgotten.

Look at how they suppressed the christmas truce.

Thanks for sharing

=D

u delet ur account rn

With Reasoning (this is Qwen on HuggingChat; it says there are zero)

Biggest threat to humanity

I know there’s no logic, but it’s funny to imagine it’s because it’s pronounced Mrs. Sippy

And if it messed up on the other word, we could say because it’s pronounced Louisianer.

I was gonna say something similar, I have heard a good number of people pronounce Mississippi as if it does have an R in it.

How do you pronounce “Mrs” so that there’s an “r” sound in it?

I don’t, but it’s abbreviated with one.

“His property”

Otherwise it’s just Ms.

Mrs. originally comes from mistress, which is why it retains the r.

Yes but from same source also wife

That came later though, as in “I had dinner with the Mrs last night.”

Yes but it did come, and took its place as the common usage. So much so that Ms. is used to describe a woman both with and without reference to marital status.

I’m down with using Mrs. not to refer to marital status, but imo just going with Ms. is clearer and easier because of how deeply associated Mrs. is with it.

But no “r” sound.

Correct. I didn’t say there was an r sound, but that it was going off of the spelling. I agree there’s no r sound.

Those implementations of LLMs in accounting software are going to be funny

Clever, by putting it in Dutch you never know if it’s a typo or Dutch.

Interesting….troubleshooting is going to be interesting in the future

It’s funny how people always quickly point out that an LLM wasn’t made for this, and then continue to shill it for use cases it wasn’t made for either (The “intelligence” part of AI, for starters)

LLM wasn’t made for this

There’s a thought experiment that challenges the concept of cognition, called The Chinese Room. What it essentially postulates is a conversation between two people, one of whom is speaking Chinese and getting responses in Chinese. And the first speaker wonders “Does my conversation partner really understand what I’m saying or am I just getting elaborate stock answers from a big library of pre-defined replies?”

The LLM is literally a Chinese Room. And one way we can know this is through these interactions. The machine isn’t analyzing the fundamental meaning of what I’m saying, it is simply mapping the words I’ve input onto a big catalog of responses and giving me a standard output. In this case, the problem the machine is running into is a legacy meme about people miscounting the number of "r"s in the word Strawberry. So “2” is the stock response it knows via the meme reference, even though a much simpler and dumber machine that was designed to handle this basic input question could have come up with the answer faster and more accurately.

When you hear people complain about how the LLM “wasn’t made for this”, what they’re really complaining about is their own shitty methodology. They built a glorified card catalog. A device that can only take inputs, feed them through a massive library of responses, and sift out the highest probability answer without actually knowing what the inputs or outputs signify cognitively.

Even if you want to argue that having a natural language search engine is useful (damn, wish we had a tool that did exactly this back in August of 1996, amirite?), the implementation of the current iteration of these tools is dogshit because the developers did a dogshit job of sanitizing and rationalizing their library of data. Also, incidentally, why Deepseek was running laps around OpenAI and Gemini as of last year.

Imagine asking a librarian “What was happening in Los Angeles in the Summer of 1989?” and that person fetching you back a stack of history textbooks, a stack of Sci-Fi screenplays, a stack of regional newspapers, and a stack of Iron-Man comic books all given equal weight? Imagine hearing the plot of the Terminator and Escape from LA intercut with local elections and the Loma Prieta earthquake.

That’s modern LLMs in a nutshell.

You’ve missed something about the Chinese Room. The solution to the Chinese Room riddle is that it is not the person in the room but rather the room itself that is communicating with you. The fact that there’s a person there is irrelevant, and they could be replaced with a speaker or computer terminal.

Put differently, it’s not an indictment of LLMs that they are merely Chinese Rooms, but rather one should be impressed that the Chinese Room is so capable despite being a completely deterministic machine.

If one day we discover that the human brain works on much simpler principles than we once thought, would that make humans any less valuable? It should be deeply troubling to us that LLMs can do so much while the mathematics behind them are so simple. Arguments that because LLMs are just scaled-up autocomplete they surely can’t be very good at anything are not comforting to me at all.

This. I often see people shitting on AI as “fancy autocomplete” or joking about how they get basic things incorrect like this post but completely discount how incredibly fucking capable they are in every domain that actually matters. That’s what we should be worried about… what does it matter that it doesn’t “work the same” if it still accomplishes the vast majority of the same things? The fact that we can get something that even approximates logic and reasoning ability from a deterministic system is terrifying on implications alone.

Why doesn’t the LLM know to write (and run) a program to calculate the number of characters?

I feel like I’m missing something fundamental.

You didn’t get good answers so I’ll explain.

First, an LLM can easily write a program to calculate the number of `r`s. If you ask an LLM to do this, you will get the code back.

But the website ChatGPT.com has no way of executing this code, even if it was generated.

The second explanation is how LLMs work. They work on the word (technically token, but think word) level. They don’t see letters. The AI behind it literally can only see words. The way it generates output is it starts typing words, and then guesses what word is most likely to come next. So it literally does not know how many `r`s are in strawberry. The impressive part is how good this “guessing what word comes next” is at answering more complex questions.

But why can’t “query the python terminal” be trained into the LLM? It just needs some UI training.

ChatGPT used to actually do this. But they removed that feature for whatever reason. Now the server that the LLM runs on doesn’t provide the LLM a Python terminal, so the LLM can’t query it.

It doesn’t know things.

It’s a statistical model. It cannot synthesize information or problem solve, only show you a rough average of its library of inputs graphed by proximity to your input.

Congrats, you’ve discovered reductionism. The human brain also doesn’t know things, as it’s composed of electrical synapses made of molecules that obey the laws of physics and direct one’s mouth to make words in response to signals that come from the ears.

Not saying LLMs don’t know things, but your argument as to why they don’t know things has no merit.

Oh, that’s why everything else you said seemed a bit off.

The LLM isn’t aware of its own limitations in this regard. The specific problem of getting an LLM to know what characters a token comprises has not been the focus of training. It’s a totally different kind of error than other hallucinations, it’s almost entirely orthogonal, but other hallucinations are much more important to solve, whereas being able to count the number of letters in a word or add numbers together is not very important, since as you point out, there are already programs that can do that.

At the moment, you can compare this perhaps to the Paris in the the Spring illusion. Why don’t people know to double-check the number of 'the’s in a sentence? They could just use their fingers to block out adjacent words and read each word in isolation. They must be idiots and we shouldn’t trust humans in any domain.
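To make the point about tokens and characters concrete, here is a minimal sketch of greedy longest-match tokenization over a toy vocabulary. The vocabulary, the IDs, and the `tokenize` helper are all made up for illustration; real tokenizers use learned vocabularies and may split this word differently.

```python
# Toy illustration only: TOY_VOCAB and its IDs are invented, not taken from any real model.
TOY_VOCAB = {"straw": 101, "berry": 102, "st": 103, "raw": 104,
             "s": 1, "t": 2, "r": 3, "a": 4, "w": 5, "b": 6, "e": 7, "y": 8}


def tokenize(word: str) -> list[int]:
    """Greedy longest-match split of `word` into token IDs from TOY_VOCAB."""
    ids = []
    i = 0
    while i < len(word):
        # Try the longest remaining substring first, then shrink.
        for j in range(len(word), i, -1):
            piece = word[i:j]
            if piece in TOY_VOCAB:
                ids.append(TOY_VOCAB[piece])
                i = j
                break
        else:
            raise ValueError(f"no token covers {word[i]!r}")
    return ids


token_ids = tokenize("strawberry")
print(token_ids)                 # the model's view: a short list of opaque integers
print("strawberry".count("r"))   # the character-level view an ordinary program gets
```

In this toy setup the word arrives as two integers, and nothing in those integers says which letters they stand for. Any knowledge of the spelling has to be learned separately.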

The most convincing arguments that llms are like humans aren’t that llm’s are good, but that humans are just unrefrigerated meat and personhood is a delusion.

This might well be true yeah. But that’s still good news for AI companies who want to replace humans – bar’s lower than they thought.

one should be impressed that the Chinese Room is so capable despite being a completely deterministic machine.

I’d be more impressed if the room could tell me how many "r"s are in Strawberry inside five minutes.

If one day we discover that the human brain works on much simpler principles

Human biology, famous for being simple and straightforward.

Ah! But you can skip all that messy biology and stuff I don’t understand that’s probably not important, and just think of it as a classical computer running an x86 architecture, and checkmate, liberal, my argument owns you now!

Because LLMs operate at the token level, I think it would be a more fair comparison with humans to ask why humans can’t produce the IPA spelling of words they can say, /nɔr kæn ðeɪ ˈizəli rid θɪŋz ˈrɪtən ˈpjʊrli ɪn aɪ pi ˈeɪ/ despite the fact that it should be simple to – they understand the sounds after all. I’d be impressed if somebody could do this too! But that most people can’t shouldn’t really move you to think humans must be fundamentally stupid because of this one curious artifact. Maybe they are fundamentally stupid for other reasons, but this one thing is quite unrelated.

why humans can’t produce the IPA spelling of words they can say, /nɔr kæn ðeɪ ˈizəli rid θɪŋz ˈrɪtən ˈpjʊrli ɪn aɪ pi ˈeɪ/ despite the fact that it should be simple to – they understand the sounds after all

That’s just access to the right keyboard interface. Humans can and do produce those spellings with additional effort or advanced tool sets.

humans must be fundamentally stupid because of this one curious artifact.

That humans turn oatmeal into essays via a curious lump of muscle is an impressive enough trick on its face.

LLMs have 95% of the work of human intelligence handled for them and still stumble on the last bits.

I mean, even people who are proficient with IPA still struggle to read whole sentences written entirely in IPA. Similarly, people who speak and read Chinese struggle to read entire sentences written in pinyin. I’m not saying people can’t do it, just that it’s much less natural for us (even though it doesn’t really seem like it ought to be).

I agree that LLMs are not as bright as they look, but my point here is that this particular thing – their strange inconsistency understanding what letters correspond to the tokens they produce – specifically shouldn’t be taken as evidence for or against LLMs being capable in any other context.

Similarly, people who speak and read Chinese struggle to read entire sentences written in pinyin.

Because pinyin was implemented by the Russians to teach Chinese to people who use Cyrillic characters. Would make as much sense to call out people who can’t use Katakana.

It’s not a fucking riddle, it’s a koan/thought experiment.

It’s questioning what ‘communication’ fundamentally is, and what knowledge fundamentally is.

It’s not even the first thing to do this. Military theory was cracking away at the ‘communication’ thing a century before, and the nature of knowledge has discourse going back thousands of years.

You’re right, I shouldn’t have called it a riddle. Still, being a fucking thought experiment doesn’t preclude having a solution. Theseus’ ship is another famous fucking thought experiment, which has also been solved.

‘A solution’

That’s not even remotely the point. Yes, there are many valid solutions. The point isn’t to solve it, but what your way of solving it says about your ideas and how it clarifies them.

I suppose if you’re going to be postmodernist about it, but that’s beyond my ability to understand. The only complete solution I know to Theseus’ Ship is “the universe is agnostic as to which ship is the original. Identity of a composite thing is not part of the laws of physics.” Not sure why you put scare quotes around it.

For different value sets and use cases, dear.

You might just love Blindsight. Here, they’re trying to decide if an alien life form is sentient or a Chinese Room:

“Tell me more about your cousins,” Rorschach sent.

“Our cousins lie about the family tree,” Sascha replied, “with nieces and nephews and Neandertals. We do not like annoying cousins.”

“We’d like to know about this tree.”

Sascha muted the channel and gave us a look that said Could it be any more obvious? “It couldn’t have parsed that. There were three linguistic ambiguities in there. It just ignored them.”

“Well, it asked for clarification,” Bates pointed out.

“It asked a follow-up question. Different thing entirely.”

Bates was still out of the loop. Szpindel was starting to get it, though… .

Blindsight is such a great novel. It has not one, not two but three great sci-fi concepts rolled into one book.

One is artificial intelligence (the ship’s captain is an AI), the second is alien life so vastly different it appears incomprehensible to human minds. And last but not least, and the most wild, vampires as an evolutionary branch of humanity that died out and has been recreated in the future.

My favorite part of the vampire thing is how they died out. Turns out vampires start seizing when trying to visually process 90° angles, and humans love building shit like that (not to mention a cross is littered with them). It’s so mundane an extinction I’d almost believe it.

Also, the extremely post-cyberpunk posthumans, and each member of the crew is a different extremely capable kind of fucked up model of what we might become, with the protagonist personifying the genre of horror that it is, while still being occasionally hilarious.

Despite being fundamentally a cosmic horror novel, and relentlessly math-in-the-back-of-the-book hard scifi it does what all the best cyberpunk does and shamelessly flirts with the supernatural at every opportunity. The sequel doubles down on this, and while not quite as good overall (still exceptionally good, but harder to follow) each of the characters explores a novel and sweet+sad+horrifying kind of love.

Oooh, I didn’t even know it had a sequel!

I wouldn’t say it flirts with the supernatural as much as it’s with one foot into weird fiction, which is where cosmic horror comes from.

Characters in the sequel include a hive-mind of post-science innovation monks, a straight up witch who charges their monastery at the head of a zombie army, and a plotline about finding what the monks think might be god. And that first scene, which is absolute fire btw.

Primary themes include… Well the bit of exposition about needing to ‘crawl off one mountain and cross a valley to reach higher peaks of understanding’, and coping as a mostly baseline human surrounded by superintelligences, ‘sufficiently advanced technology’, etc.

That’s a very long answer to my snarky little comment :) I appreciate it though. Personally, I find LLMs interesting and I’ve spent quite a while playing with them. But after all they are like you described, an interconnected catalogue of random stuff, with some hallucinations to fill the gaps. They are NOT a reliable source of information or general knowledge or even safe to use as an “assistant”. The marketing of LLMs as being fit for such purposes is the problem. Humans tend to turn off their brains and to blindly trust technology, and the tech companies are encouraging them to do so by making false promises.

(damn, wish we had a tool that did exactly this back in August of 1996, amirite?)

Wait, what was going on in August of '96?

Google Search premiered

a much simpler and dumber machine that was designed to handle this basic input question could have come up with the answer faster and more accurately

The human approach could be to write a (python) program to count the number of characters precisely.

When people refer to agents, is this what they are supposed to be doing? Is it done in a generic fashion or will it fall over with complexity?

No, this isn’t what ‘agents’ do, ‘agents’ just interact with other programs. So like move your mouse around to buy stuff, using the same methods as everything else.

It’s like a fancy, diversely useful, diversely catastrophic, hallucination-prone API.

‘agents’ just interact with other programs.

If that other program is, say, a python terminal then can’t LLMs be trained to use agents to solve problems outside their area of expertise?

I just tested chatgpt to write a python program to return the frequency of letters in a string, then asked it for the number of L’s in the longest placename in Europe.

```python
# String to analyze
text = "Llanfairpwllgwyngyllgogerychwyrndrobwllllantysiliogogogoch"

# Convert to lowercase to count both 'L' and 'l' as the same
text = text.lower()

# Dictionary to store character frequencies
frequency = {}

# Count characters
for char in text:
    if char in frequency:
        frequency[char] += 1
    else:
        frequency[char] = 1

# Show the number of 'l's
print("Number of 'l's:", frequency.get('l', 0))
```

I was impressed until

Output

Number of 'l’s: 16

Yeah it turns out to be useless!
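For what it’s worth, actually running an equivalent count gives a different number than the one quoted. A minimal sketch using `collections.Counter` from the standard library (the variable names are my own):

```python
from collections import Counter

# Same place name as in the comment above.
text = "Llanfairpwllgwyngyllgogerychwyrndrobwllllantysiliogogogoch"

# Lowercase first so 'L' and 'l' are counted together.
frequency = Counter(text.lower())

l_count = frequency["l"]
print("Number of 'l's:", l_count)  # prints 11 when executed
```

So the program itself looks fine. The 16 reads like text the model generated alongside the code rather than the result of executing it, though that is an inference from the mismatch.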

When people refer to agents, is this what they are supposed to be doing?

That’s not how LLMs operate, no. They aggregate raw text and sift for popular answers to common queries.

ChatGPT is one step removed from posting your question to Quora.

But an LLM as a node in a framework that can call a python library should be able to count the number of Rs in strawberry.

It doesn’t scale to AGI but it does reduce hallucinations.
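A minimal sketch of that idea, with the model stubbed out. Everything here is hypothetical: `fake_llm`, the JSON tool-call format, and the `TOOLS` registry are placeholders, not any vendor’s API. The only point is that the framework does the counting, not the model.

```python
import json


def count_letter(word: str, letter: str) -> int:
    """Deterministic tool: case-insensitive count of `letter` in `word`."""
    return word.lower().count(letter.lower())


# Hypothetical tool registry the framework exposes to the model.
TOOLS = {"count_letter": count_letter}


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model: always requests the counting tool.

    A real LLM would have to decide on its own when to emit such a request.
    """
    return json.dumps({"tool": "count_letter",
                       "args": {"word": "strawberry", "letter": "r"}})


def run_agent(prompt: str) -> str:
    """One round trip: ask the model, run the tool it names, format the answer."""
    request = json.loads(fake_llm(prompt))
    tool = TOOLS[request["tool"]]
    args = request["args"]
    result = tool(**args)
    return f"There are {result} '{args['letter']}'s in {args['word']}."


answer = run_agent("How many r's are in strawberry?")
print(answer)
```

The hard part in practice is the piece stubbed out here: getting the model to recognise when a question should be routed to a tool at all.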

But an LLM as a node in a framework that can call a python library

Isn’t how these systems are configured. They’re just not that sophisticated.

So much of what Sam Altman is doing is brute force, which is why he thinks he needs a $1T investment in new power to build his next iteration model.

Deepseek gets at the edges of this through their partitioned model. But you’re still asking a lot for a machine to intuit whether a query can be solved with some exigent python query the system has yet to identify.

It doesn’t scale to AGI but it does reduce hallucinations

It has to scale to AGI, because a central premise of AGI is a system that can improve itself.

It just doesn’t match the OpenAI development model, which is to scrape and sort data hoping the Internet already has the solution to every problem.

The claim is not that all LLMs are agents, but rather that agents (which incorporate an LLM as one of their key components) are more powerful than an LLM on its own.

We don’t know how far away we are from recursive self-improvement. We might already be there to be honest; how much of the job of an LLM researcher can already be automated? It’s unclear if there’s some ceiling to what a recursively-improved GPT4.x-w/e can do though; maybe there’s a key hypothesis it will never formulate on the quest for self-improvement.

The only thing worse than the AI shills are the tech bro mansplanations of how “AI works” when they are utterly uninformed of the actual science. Please stop making educated guesses for others and typing them out in a teacher’s voice. It’s extremely aggravating.

You’d still be better off starting with a 50s language processor, then grafting on some API calls.

in what context? LLMs are extremely good at bridging from natural language to API calls. I dare say it’s one of the few use cases that have decisively landed on “yes, this is something LLMs are actually good at.” Maybe not five nines of reliability, but language itself doesn’t have five nines of reliability.

Yes but have you considered that it agreed with me so now i need to defend it to the death against you horrible apes, no matter the allegation or terrain?

Imagine asking a librarian “What was happening in Los Angeles in the Summer of 1989?” and that person fetching you … That’s modern LLMs in a nutshell.

I agree, but I think you’re still being too generous to LLMs. A librarian who fetched all those things would at least understand the question. An LLM is just trying to generate words that might logically follow the words you used.

IMO, one of the key ideas with the Chinese Room is that there’s an assumption that the computer / book in the Chinese Room experiment has infinite capacity in some way. So, no matter what symbols are passed to it, it can come up with an appropriate response. But, obviously, while LLMs are incredibly huge, they can never be infinite. As a result, they can often be “fooled” when they’re given input that is semantically similar to a meme, joke or logic puzzle. The vast majority of the training data that matches the input is the meme, or joke, or logic puzzle. LLMs can’t reason, so they can’t distinguish between “this is just a rephrasing of that meme” and “this is similar to that meme but distinct in an important way”.

Can you explain the difference between understanding the question and generating the words that might logically follow? I’m aware that it’s essentially a more powerful version of how auto-correct works, but why should we assume that shows some lack of understanding at a deep level somehow?

Can you explain the difference between understanding the question and generating the words that might logically follow?

I mean, it’s pretty obvious. Take someone like Rowan Atkinson whose death has been misreported multiple times. If you ask a computer system “Is Rowan Atkinson Dead?” you want it to understand the question and give you a yes/no response based on actual facts in its database. A well designed program would know to prioritize recent reports as being more authoritative than older ones. It would know which sources to trust, and which not to trust.

An LLM will just generate text that is statistically likely to follow the question. Because there have been many hoaxes about his death, it might use that as a basis and generate a response indicating he’s dead. But, because those hoaxes have also been debunked many times, it might use that as a basis instead and generate a response indicating that he’s alive.

So, if he really did just die and it was reported in reliable fact-checked news sources, the LLM might say “No, Rowan Atkinson is alive, his death was reported via a viral video, but that video was a hoax.”

but why should we assume that shows some lack of understanding

Because we know what “understanding” is, and that it isn’t simply finding words that are likely to appear following the chain of words up to that point.
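The “well designed program” described above could be sketched roughly like this. The `Report` type, the trusted-source list, and the sample records are all invented for illustration; this is not a claim about how any real fact-checking system works.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Report:
    source: str
    published: date
    claims_dead: bool


# Hypothetical allow-list of sources the program trusts.
TRUSTED_SOURCES = {"example-wire", "example-gazette"}


def latest_trusted(reports: list[Report]) -> Optional[Report]:
    """Return the most recent report from a trusted source, or None."""
    trusted = [r for r in reports if r.source in TRUSTED_SOURCES]
    return max(trusted, key=lambda r: r.published, default=None)


# Invented sample data: an old viral hoax and two later trusted reports.
reports = [
    Report("viral-video", date(2020, 1, 1), claims_dead=True),
    Report("example-wire", date(2020, 1, 2), claims_dead=False),
    Report("example-gazette", date(2021, 6, 1), claims_dead=False),
]

best = latest_trusted(reports)
print(best)
```

The rule is explicit here: untrusted sources are dropped, then the newest report wins. A bare language model has no such rule unless something in its training or its prompt supplies one.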

The Rowan Atkinson thing isn’t misunderstanding, it’s understanding but having been misled. I’ve literally done this exact thing myself, say something was a hoax (because in the past it was) but then it turned out there was newer info I didn’t know about. I’m not convinced LLMs as they exist today don’t prioritize sources – if trained naively, sure, but these days they can, for instance, integrate search results, and can update on new information. If the LLM can answer correctly only after checking a web search, and I can do the same only after checking a web search, that’s a score of 1-1.

because we know what “understanding” is

Really? Who claims to know what understanding is? Do you think it’s possible there can ever be an AI (even if different from an LLM) which is capable of “understanding?” How can you tell?

I’m not convinced LLMs as they exist today don’t prioritize sources – if trained naively, sure, but these days they can, for instance, integrate search results, and can update on new information.

Well, it includes the text from the search results in the prompt, it’s not actually updating any internal state (the network weights), a new “conversation” starts from scratch.

Yes that’s right, LLMs are context-free. They don’t have internal state. When I say “update on new information” I really mean “when new information is available in its context window, its response takes that into account.”
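As a rough sketch of what “taking new information into account” means mechanically: the search results are pasted into the prompt text, and nothing persists between calls. `build_prompt` and the snippet strings are hypothetical placeholders, not a real product’s pipeline.

```python
def build_prompt(question: str, search_snippets: list[str]) -> str:
    """Assemble a single prompt string; the model only ever sees this text."""
    context = "\n".join(f"- {snippet}" for snippet in search_snippets)
    return (
        "Use the following search results to answer.\n"
        f"{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Placeholder snippets; in a real system these would come from a search API.
snippets = ["<snippet from search result 1>", "<snippet from search result 2>"]

with_search = build_prompt("Is the report a hoax?", snippets)
without_search = build_prompt("Is the report a hoax?", [])

# No weights change between the two calls; only the prompt text differs.
print(with_search)
```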

That’s not true for the commercial AIs. We don’t know what they are doing.

If you were just a hater, that would be cool with me. I don’t like “AI” either. The explanations you give are misleading at best. It’s embarrassing. You fail to realise the fact that NOBODY KNOWS why or how they work. It’s just extreme folly to pretend you know these things. It’s been observed to reason its way to novel ideas, which is why the scientists who work with these models are confused about why it happens. It’s not just data lookup. You think the entire Web and history of man fits in 8 GB? You are educating people with just your basic rage-filled opinion, not actual answers. You are angry at the discovery, we get that. You don’t believe in it. Ok. But don’t say you know what it does and how, or what OpenAI does behind its closed doors. It’s just embarrassing. We are working on papers to try to explain the emergent phenomenon we discovered in neural nets that makes it seem like it can reason and output mostly correct answers to difficult questions. It’s not in the “data” and it looks for it. You could just start learning if you want to be an educator in the field.

So, what is ‘understanding’?

If you need help, you can look at Marx for an answer that still mostly holds up, if your server is an indication of your reading habits.

oh does he have a treatise on the subject?

He’s said some relevant stuff

nice

Can we say for certain that human brains aren’t sophisticated Chinese rooms…

Yes.

It’s marketed like it’s AGI, so we should treat it like AGI to show that it isn’t AGI. Lots of people buy the bullshit.

AGI is only a benchmark because it gets OpenAI out of a contract with Microsoft when it occurs.

You can even drop the “a” and “g”. There isn’t even “intelligence” here. It’s not thinking, it’s just spicy autocomplete.

Barely even spicy.

There are different types of Artificial intelligences. Counter-Strike 1.6 bots, by definition, were AI. They even used deep learning to figure out new maps.

If you want an even older example, the ghosts in Pac-Man could be considered AI as well.

By this logic any solid state machine is AI.

These words used to mean things before marketing teams started calling everything they want to sell “AI”

No. Artificial Intelligence has to be imitating intelligent behavior - such as the ghosts imitating how, ostensibly, a ghost trapped in a maze and hungry for yellow circular flesh would behave, and how CS1.6 bots imitate the behavior of intelligent players. They artificially reproduce intelligent behavior.

Which means LLMs are very much AI. They are not, however, AGI.

No, the logic for a Pac Man ghost is a solid state machine

Stupid people attributing intelligence to something that is probably not is a shameful hill to die on.

Your god is just an autocomplete bot that you refuse to learn about outside the hype bubble

Okay but if i say something from outside the hype bubble then all my friends except chatgpt will go away.

Also chatgpt is my friend and always will be, and it even told me i don’t have to take the psych meds that give me tummy aches!

Okay, what is your definition of AI then, if nothing burned onto silicon can count?

If LLMs aren’t AI, then absolutely nothing up to this point probably counts either.

since nothing burned into silicon can count

Oh noo you called me a robot racist. Lol fuck off dude you know that’s not what I’m saying

The problem with supporters of AI is they learned everything they know from the companies trying to sell it to them. Like a 50s mom excited about her magic tupperware.

AI implies intelligence

To me that means an autonomous being that understands what it is.

First of all, these programs aren’t autonomous, they need to be seeded by us. We send a prompt or question; even when left to their own devices, they don’t do anything until they are given an objective or reward by us.

Looking up the most common answer isn’t intelligence, there is no understanding of cause and effect going on inside the algorithm, just regurgitating the dataset

These models do not reason, though some do a very good job of trying to convince us.

As far as I’m concerned, “intelligence” in the context of AI basically just means the ability to do things that we consider to be difficult. It’s both very hand-wavy and a constantly moving goalpost. So a hypothetical pacman ghost is intelligent before we’ve figured out how to do it. After it’s been figured out and implemented, it ceases to be intelligent but we continue to call it intelligent for historical reasons.

What if I told you agi is made up by the same people that misuse ai

Yes but then we built a weapon with which to murder truth, and with it meaning, so everything is just vibesy meaning-mush now. And you’re a big dumb meanie for hating the thing that saved us from having/being able to know things. Meanie.

Maybe they should call it what it is

Machine Learning algorithms from 1990 repackaged and sold to us by marketing teams.

Hey now, that’s unfair and queerphobic.

These models are from 1950, with juiced up data sets. Alan Turing personally did a lot of work on them, before he cracked the math and figured out they were shit and would always be shit.

Fair lol

Alan Turing was the GOAT

RIP my beautiful prince

Also, thank you for being basically a person. This topic does a lot to convince me those aren’t a thing.

His politics weren’t perfect, but he got more nazis killed than a lot of people with much worse takes, and was a genuinely brilliant reasonably ethical contributor to a lot of cool shit that should have fucking stayed cool.

Machine learning algorithm from 2017, scaled up a few orders of magnitude so that it finally more or less works, then repackaged and sold by marketing teams.

Adding weights doesn’t make it a fundamentally different algorithm.

We have hit a wall where these programs have combed over the totality of the internet and all available datasets and texts in existence.

There isn’t any more training data to improve with, and these programs have started polluting the internet with bad data that will make them even dumber and more incorrect in the long run.

We’re done here until there’s a fundamentally new approach that isn’t repetitive training.

Okay but have you considered that if we just reduce human intelligence enough, we can still maybe get these things equivalent to human level intelligence, or slightly above?

We have the technology.

Also literally all the resources in the world.

Transformers were pretty novel in 2017, I don’t know if they were really around before that.

Anyway, I’m doubtful that a larger corpus is what’s needed at this point. (Though that said, there’s a lot more text remaining in instant messenger chat logs like Discord that probably has yet to be integrated into LLMs. Not sure.) I’m also doubtful that scaling up is going to keep working, but it wouldn’t surprise me that much if it does keep working for a long while. My guess is that there are some small tweaks to be discovered that really improve things a lot but still basically look like repetitive training, as you put it. Who can really say though.

then continue to shill it for use cases it wasn’t made for either

The only thing it was made for is “spicy autocomplete”.

Turns out spicy autocomplete can contribute to the bottom line. Capitalism :(

So could tulip bulbs, for a while.

I would say more “blackpilling”, i genuinely don’t believe most humans are people anymore after dealing with this.

Fair point, but a big part of “intelligence” tasks are memorization.

Computers for all intents and purposes have perfect recall, so since it was trained on a large data set it would have much better intelligence. But in reality what we consider intelligence is extrapolating from existing knowledge, which is what “AI” has shown to be pretty shit at.

They don’t. They can save information on drives, but searching is expensive and fuzzy search is a mystery.

Just because you can save a mp3 without losing data does not mean you can save the entire Internet in 400gb and search within an instant.

Which is why it doesn’t search within an instant and it uses a bunch of energy and needs to rely on evaporative cooling to stop overheating the servers

It’s all about weamwork 🤝

The end is never the end The end is never the end The end is never the end The end is never the end The end is never the end The end is never the end The end is never the end The end is never the end

Ah a fellow stanley parable enjoyer, love to see it!

*the end is never the end is never the end

weamwork is my new favorite word, ahahah!

You’re asking about a double-U. Double means two. I think the AI reasoned completely correctly.

Now ask how many asses there are in assassinations

Man AI is ass at this

*laugh track*

ohh god, I never thought to ask reasoning models,

DeepSeek R1 7b was gold too

then 14b, man sooo close…

And people are trusting these things to do jobs / parts of jobs that humans used to do.

Humans are pretty dumb sometimes lol

It’s far better at the use of there, their, and they’re.

The average US citizen couldn’t craft a professional-sounding document if their life depended on it.

It’s not better than a professional at anything. The average human is far below that bar.

I like the way you worded that a lot

I wonder how QWEN 3.0 performs cause it surpasses Deepseek apparently

I don’t have any other models pulled down, if they’re open I’ll try it and respond back here

Alr

It did quite well for this.

Oh god, the asses multiplied 🤣🤣🤣

It’s painful how Reddit that is…

So,

Now,

Alright,

Probably where 90% of the training for this particular problem came from.

Worked well for me

What is that font bro…

It’s called sweetpea and my sweetpea picked it out for me. How dare I stick with something my girl picked out for me.

But the fact that you actually care what font someone else uses is sad

Chill bro it’s a joke 💀. It’s like when someone uses comic sans as a font.

Ohh.

You’re Schrödinger’s douchebag

Got it

Nice Rs.

I understand it’s probably more user friendly, but I still somehow find myself disappointed the answers weren’t indexed from zero. Was this LLM written in MATLAB?

Most users aren’t used to zero index so they would most likely think there was a problem with it haha

Is this ChatGPT o3-pro?

ChatGPT 4o

One of the interesting things I notice about the ‘reasoning’ models is their responses to questions occasionally include what my monkey brain perceives as ‘sass’.

I wonder sometimes if they recognise the triviality of some of the prompts they answer, and subtly throw shade.

One’s going to respond to this with ‘clever monkey! 🐒 Have a banana 🍌.’

I really like checking these myself to make sure it’s true. I WAS NOT DISAPPOINTED!

(Total Rs is 8. But the LOGIC ChatGPT pulls out is ……. remarkable!)

“Let me know if you’d like help counting letters in any other fun words!”

Oh well, these newish calls for engagement sure take on ridiculous extents sometimes.

I want an option to select Marvin the paranoid android mood: “there’s your answer, now if you could leave me to wallow in self-pity”

Here I am, emissions the size of a small country, and they ask me to count letters…

Lol someone could absolutely do that as a character card.

This is deepseek model right? OP was posting about GPT o3

Yes this is a small(ish) offline deepseek model

Try with o4-mini-high. It’s made to think like a human by checking its answer and working step by step, rather than just kinda guessing one like here.

What is this devilry?

How many times do I have to spell it out for you chargpt? S-T-R-A-R-W-B-E-R-R-Y-R

We are fecking doomed!

I asked it how many Ts are in names of presidents since 2000. It said 4 and stated that “Obama” contains 1 T.

Toebama

We gotta raise the bar, so they keep struggling to make it “better”

My attempt

0000000000000000
0000011111000000
0000111111111000
0000111111100000
0001111111111000
0001111111111100
0001111111111000
0000011111110000
0000111111000000
0001111111100000
0001111111100000
0001111111100000
0001111111100000
0000111111000000
0000011110000000
0000011110000000

Btw, I refuse to give my money to AI bros, so I don’t have the “latest and greatest”

Tested on ChatGPT o4-mini-high

It sent me this

0 0 0 1 1 1 1 1 0 0 0 0 0 0 0 0
0 0 1 1 1 1 1 1 1 1 0 0 0 0 0 0
0 0 1 1 1 1 1 1 1 0 0 0 0 0 0 0
0 1 1 1 1 1 1 1 1 1 1 0 0 0 0 0
0 1 1 1 1 1 1 1 1 1 1 1 0 0 0 0
0 0 1 1 1 1 1 1 1 1 1 0 0 0 0 0
0 0 0 1 1 1 1 1 1 1 0 0 0 0 0 0
0 0 1 1 1 1 1 1 0 0 0 0 0 0 0 0
0 1 1 1 1 1 1 1 1 1 1 0 0 0 0 0
1 1 1 1 1 1 1 1 1 1 1 1 0 0 0 0
1 1 1 1 1 1 1 1 1 1 1 1 0 0 0 0
1 1 1 1 1 1 1 1 1 1 1 1 0 0 0 0
1 1 1 1 1 1 1 1 1 1 1 1 0 0 0 0
0 0 1 1 1 0 0 1 1 1 0 0 0 0 0 0
0 1 1 1 0 0 0 0 1 1 1 0 0 0 0 0
1 1 1 1 0 0 0 0 1 1 1 1 0 0 0 0

I asked it to remove the spaces

0001111100000000
0011111111000000
0011111110000000
0111111111100000
0111111111110000
0011111111100000
0001111111000000
0011111100000000
0111111111100000
1111111111110000
1111111111110000
1111111111110000
1111111111110000
0011100111000000
0111000011100000
1111000011110000

I guess I just murdered a bunch of trees and killed a random dude with the water it used, but it looks good

I just murdered a bunch of trees and killed a random dude with the water it used, but it looks good

Tech bros: “Worth it!”

It’s a pretty big problem, but as long as governments don’t do shit then we’re pretty much fucked.

Either we take the train and contribute to the problem, or we don’t but get left behind, and end up being the harmed one.

but as long as governments don’t do shit then we’re pretty much fucked

Story of the last few decades (or centuries since I don’t know history too well)

Yup

I don’t get it

It used to reply 2 until this new upgrade. But now, after 14 min, the new update gives you the right answer

interesting

I’m not involved in LLMs, but apparently the way it works is that the sentence is broken into words and each word is assigned a unique number, and that’s how the information is stored. So the LLM never sees the actual word.

Adding to this, each word and words around it are given a statistical percentage. In other words, what are the odds that word 1 and word 2 follow each other? You scale that out for each word in a sentence and you can see that LLMs are just huge math equations that put words together based on their statistical probability.
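For anyone who wants to see the "odds that word 2 follows word 1" idea in code, here is a toy Python sketch. It is a plain bigram counter over a made-up nine-word corpus, which is an assumption for illustration only: real LLMs use a neural network over tokens and condition on the whole context, not a lookup table of word pairs.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, purely for illustration.
corpus = "the cat sat on the mat the cat ate".split()

# Count how often each word is followed by each other word.
follow_counts = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    follow_counts[current][nxt] += 1


def next_word_probs(word):
    """Turn raw follow counts for `word` into probabilities."""
    counts = follow_counts[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


# "the" is followed by "cat" twice and "mat" once in this corpus.
probs = next_word_probs("the")
```

Picking the highest-probability entry over and over is the crudest possible version of "spicy autocomplete".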

This is key because, I can’t emphasize this enough, AI does not think. We (humans) anthropomorphize them, giving them human characteristics when they are little more than number crunchers.

Not words but tokens, strawberry could be the tokens ‘straw’ and ‘berry’, but it could also be ‘straw’, ‘be’ and ‘rry’
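A rough Python sketch of what that looks like from the model's side. The vocabulary and ID numbers below are invented for illustration, and the greedy longest-match rule is a simplification I'm assuming here; real tokenizers learn their pieces from data and have far larger vocabularies.

```python
# Hypothetical toy vocabulary with made-up IDs.
VOCAB = {"straw": 101, "berry": 102, "be": 103, "rry": 104, "r": 105}


def tokenize(text, vocab):
    """Greedy longest-match split of `text` into known pieces, returned as IDs."""
    ids = []
    i = 0
    while i < len(text):
        # Try the longest possible piece first, then shrink.
        for end in range(len(text), i, -1):
            piece = text[i:end]
            if piece in vocab:
                ids.append(vocab[piece])
                i = end
                break
        else:
            raise ValueError(f"no token covers {text[i:]!r}")
    return ids


# The model receives these two numbers, not ten letters.
token_ids = tokenize("strawberry", VOCAB)
```

Nothing in `[101, 102]` says how many r's are inside, which is why the letter-counting question is awkward for a model that only ever sees IDs.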

The joke is that it took 14 mins to give that answer

ah

AI is amazing, we’re so fucked.

/s

Unironically, we are fucked when management think AI can replace us. Not when AI can actually replace us.

Deep reasoning is not needed to count to 3.
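For comparison, this is the whole job when you can actually see the characters (plain Python, nothing assumed beyond the word itself):

```python
word = "strawberry"

# Count the letter directly on the character string.
r_count = word.count("r")

# Zero-indexed positions of each "r", for the MATLAB skeptics.
r_positions = [i for i, ch in enumerate(word) if ch == "r"]
```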

It is if you’re creating ragebait.

“A guy instead”