- cross-posted to:

- [email protected]

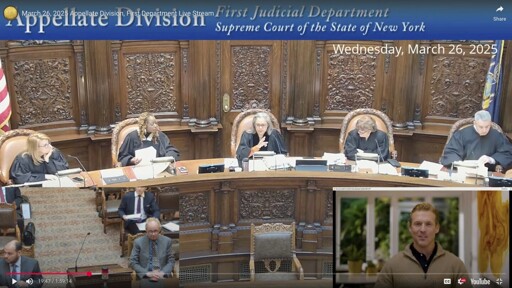

The latest bizarre chapter in the awkward arrival of artificial intelligence in the legal world unfolded March 26 under the stained-glass dome of New York State Supreme Court Appellate Division’s First Judicial Department, where a panel of judges was set to hear from Jerome Dewald, a plaintiff in an employment dispute.

On the video screen appeared a smiling, youthful-looking man with a sculpted hairdo, button-down shirt and sweater.

“May it please the court,” the man began. “I come here today a humble pro se before a panel of five distinguished justices.”

“Ok, hold on,” Manzanet-Daniels said. “Is that counsel for the case?”

“I generated that. That’s not a real person,” Dewald answered.

It was, in fact, an avatar generated by artificial intelligence. The judge was not pleased.

So he wasn’t using AI to make the argument, just to speak his own words, because he mumbles.

While he should have identified this properly, this is the least offensive use of AI generated video I can imagine.

Did you even watch the video? The judge specifically calls him out for lying about a speech issue, because he'd had several conversations up to that point without issue. She's not mad about him using an AI video; she's mad that he's trying to promote some scam AI grift business of his by using her courtroom as free publicity.

What’s really funny is it’s what AI should be used for.

Yeah, I unfortunately get why the court had to stop it: they were blindsided, and this guy didn’t think through all the potential problems. The big one that occurs to me now is what happens if the AI video says something and the client claims it’s an inaccurate representation of their wishes. How’s a court supposed to figure out if the AI is messing up or if the client is lying? There are probably a dozen more that someone who reads statutes and opinions all day could think of. Still, this seems like an innocent mistake from a pro se litigant who was trying to come up with a reasonable accommodation for their disability and just didn’t do it correctly, so I hope this doesn’t prejudice his case at all.

In this specific case, I believe he fed it the words. AI-generated video plus text-to-speech, that sort of thing.

But I could absolutely see it happening as you describe.

The article reads like the guy gave the AI avatar a script to read rather than having the AI avatar generate its own argument. I doubt the plaintiff would have referred to it as prerecorded or readily admitted it was an AI avatar if he intended for this thing to argue on his behalf rather than just speak on his behalf.

I really don’t see a problem with this.

Yeah, if the situation is as the article implies then there is absolutely no issue, but if I was running a court I would want to put a pause on things and review source code or get sworn testimony from someone who built it first to be on the absolute safe side. Like, if something did go wrong it would be kind of hard to un-hear that and not allow it to influence the ultimate outcome of things.
